What is text-to-creation? Text-to-creation refers to the category of technologies that take text as input and produce media spanning text, audio, images, or video as output.

– Sandy Diao

Text is the universal form of creation

This statement may not be immediately obvious, but it is perhaps the most logical explanation for the widespread consumer adoption of LLM (large language model)-powered experiences that we are seeing today. As of writing, OpenAI has publicly disclosed that ChatGPT has well over 100 million monthly active users. One third of Gen Z, millennials, and Gen X have tried generative AI technologies (Statista 2023). The market for AI has grown to $6.6 billion this year, and is expected to more than triple to $21 billion by the end of the decade (Statista 2023). These numbers only scratch the surface, because they measure AI-only tools. When we consider the possibilities of using AI to supercharge industries such as pharmaceuticals ($1.4 trillion) or entertainment ($717 billion), the potential impact becomes far larger. So the natural question is: what happens from here, and what opportunities lie ahead? To answer it, we must look at how we got here to understand how our behaviors will evolve next.

Text is the primary medium of daily communication

To provide context on how I’ve developed my point of view, I’ve worked behind the scenes to launch several AI products. During my time at Indiegogo, I helped hundreds of founders launch hardware products that incorporated early versions of edge computing on consumer devices, such as security cameras and emotional companion robots. I’ve also worked at Pinterest and Meta, whose advertising platforms gave advertisers access to AI ad delivery through machine-learned audience models for conversion optimization. Currently, I work on growth at Descript, where we’ve built text-based video editing software powered by generative AI models across transcription, voice synthesis, audio generation, and more.

At first glance, Descript may seem like a surface-level UX change to a video editor. However, when you try to understand why it works, you’ll start to realize that it’s not just a coincidence that Descript is as easily learned and accepted by content creators and businesses as it is. Beyond Descript, the common theme across all types of creation is that we create with text to communicate. Most communication happens asynchronously, and the way we do that is via text – handwritten, emailed, scripted, documented, in blog posts, books, engravings, and the list goes on and on.

When we consider the incredible versatility and historical importance of written language, and layer on what’s happening in the landscape of ChatGPT adoption, it becomes clear that text is truly the foundation upon which our modern forms of communication are built. Text-based prompts through a chat interface are the initial form factor that allows us to talk to computers in a way that we’ve never been able to before.

Text can be used to create more than just text

In addition to text-based prompts that generate text-based outcomes, there are many other forms of text-to-creation that are becoming prevalent today. For instance, text-to-speech tools based on speech synthesis models are being used in everything from virtual assistants and smart home devices to audiobooks and podcasts.

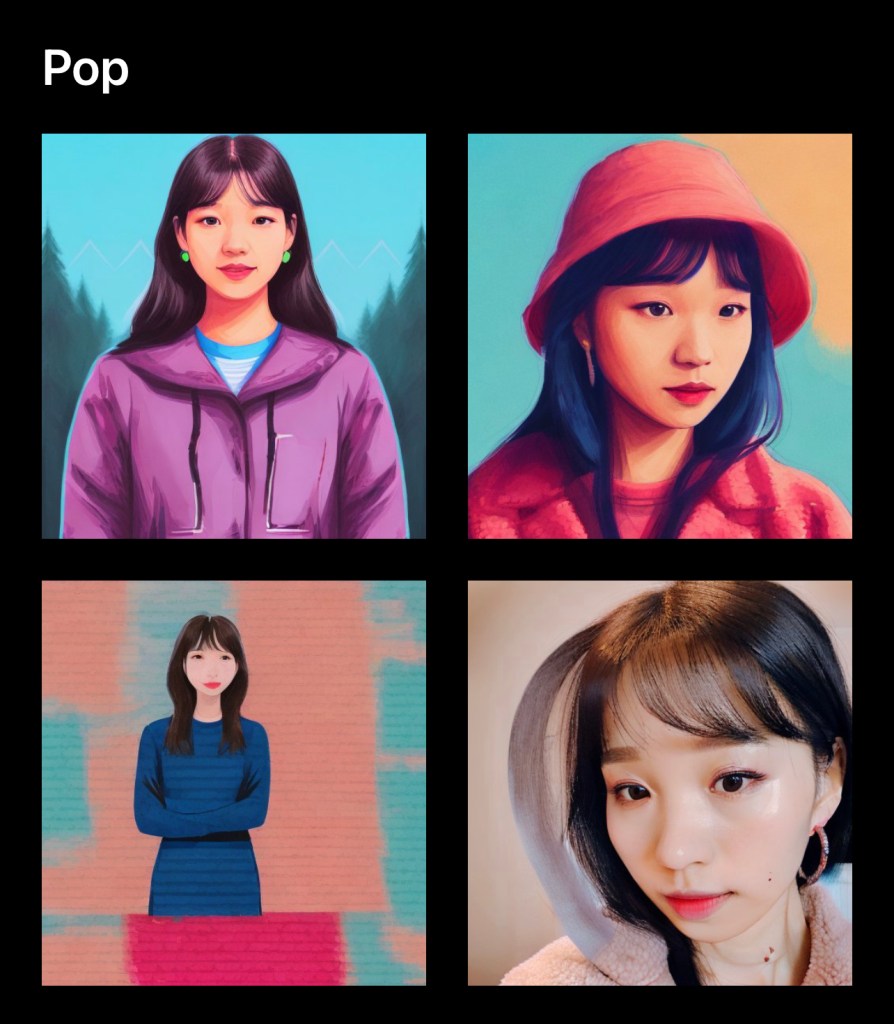

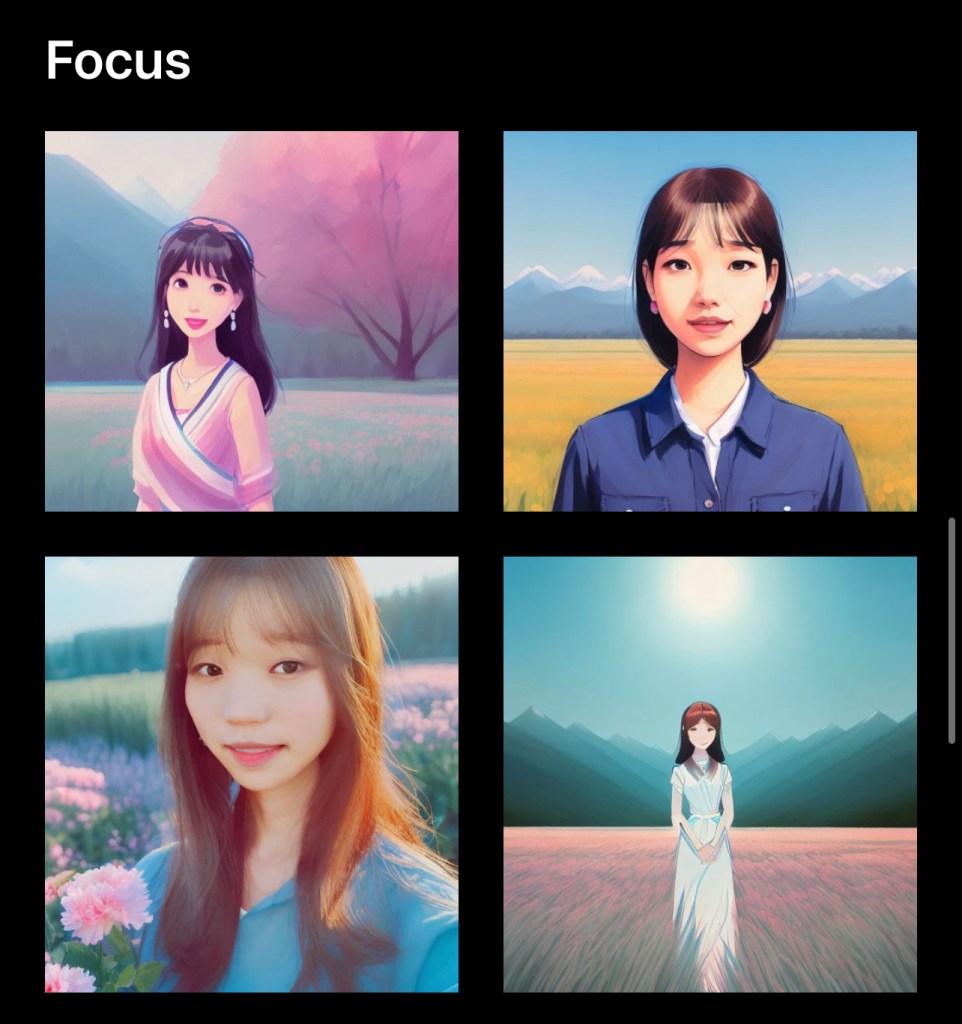

Text-to-image technology is also on the rise and has the potential to revolutionize everything from advertising and marketing to film and television. This technology can produce realistic, high-quality images from text descriptions. That reduces the need for expensive photo shoots and video productions, and makes it easier and more affordable for creators to bring their visions to life.

Lastly, text-to-expanded text creation is another area of development that could become even more powerful. As artificial intelligence and machine learning continue to improve, we may soon be able to create prompts with minimal context that are fine-tuned to create results indistinguishable from the work of humans. Imagine being able to write a book in precisely your own style and intent by providing just a log line. This could have far-reaching implications for industries such as journalism, advertising, and content creation, fundamentally altering the way we produce and consume written content.

The emergence of text-to-creation was inevitable

Considering text as the medium of creation throughout history makes a lot of sense. When thinking about the earliest forms of writing, one might envision cave paintings and hieroglyphics from history classes. Fast forward to the computing age, where humans use text to communicate, record, and create through typing. The written word remains the foundation of stories, systems of belief, and education, spanning from religious texts and philosophical treatises to novels and scientific papers.

In recent times, new forms of text-based creativity have emerged. The novel, which rose to prominence in the 18th and 19th centuries, marked a significant departure from traditional forms of storytelling and introduced new techniques for character development and plot construction. In the 20th century, the internet and digital technologies opened up new avenues for text creation, including blogs, social media, e-books, and online journalism.

Today, we continue to tell stories and share information primarily through text, even as we create short and long-form videos, explainer videos on YouTube, and self-created and hosted curricula across over 600 million blogs as of this year (Web Tribunal 2023). In many cases, even videos and movies originate as scripts. And while not all videos require manual text tagging, it’s worth considering the effort required to classify the topics and information in those that do.

The ideas that are expressed in written mediums originate in the minds of writers and creators before they are put onto paper. In order to effectively share and communicate these ideas with others, the text needs to be well-crafted and nearly finalized. Recently, LLMs have given us the ability to communicate sequentially and iteratively, much like natural language conversations. However, just as there are good and bad conversations based on the context provided and the clarity of instructions, there are also more and less effective ways to prompt an LLM. The effectiveness of text-based communication depends on the communicator’s abilities. In this new world of accessible LLMs, it is important to learn how to turn our thoughts into clear communication using natural language.
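To make the difference between a vague and a specific prompt concrete, here is a minimal Python sketch. The `build_prompt` helper and its section labels ("Context", "Task", "Constraints") are hypothetical and only illustrate one way to structure a request; they are not part of any LLM provider's API, and different models may respond better to other layouts.

```python
from typing import Sequence


def build_prompt(task: str, context: str = "", constraints: Sequence[str] = ()) -> str:
    """Assemble a prompt from a task, optional background, and explicit constraints.

    Hypothetical helper for illustration only; the section labels are an assumption.
    """
    sections = []
    if context:
        # Background the model cannot infer on its own.
        sections.append(f"Context: {context}")
    # The instruction itself, stated once and plainly.
    sections.append(f"Task: {task}")
    if constraints:
        # Explicit limits on length, tone, format, and so on.
        bullet_list = "\n".join(f"- {rule}" for rule in constraints)
        sections.append(f"Constraints:\n{bullet_list}")
    return "\n\n".join(sections)


# A vague request: the model has to guess audience, length, and tone.
vague = build_prompt("Write about our product.")

# A specific request: the same intent, with the context spelled out.
specific = build_prompt(
    task="Write a product announcement for a text-based video editor.",
    context="The audience is podcasters who have never edited video.",
    constraints=["Keep it under 150 words.", "Use a friendly, plain tone."],
)
```

Both strings could be sent to the same model. The second one simply leaves less for the model to guess, which is the same reason a well-briefed conversation tends to go better than an unprepared one.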

Now, tools like Descript enable users to create a custom voice model with just a few minutes of training data. In the future, some of these models could be instant and hidden within app experiences. Completing a computer task could be as easy as speaking to it in natural language and asking it to perform a task.

The emerging role of natural language speech

An important development in this area will be enabling speech, which is the most common form of natural language delivery today, to be converted into text so that we can use it for iterative creation. Significant progress has already been made in converting human speech to text through speech-to-text transcription models, such as Whisper, Google Cloud’s Speech-to-Text, and Amazon Transcribe. Let’s take a look at the most important moments in the evolution of digitizing natural language speech by decade:

- 1950s: One of the earliest examples of speech recognition technology can be traced back to Bell Labs, which introduced the Audrey system for recognizing spoken digits. While the technology was still in its infancy, it laid the groundwork for the development of modern speech-to-text systems.

- 1960s: Physicist John Larry Kelly, Jr. used an IBM 704 computer to synthesize speech.

- 1970s: Itakura developed the line spectral pairs method. MUSA, a stand-alone speech synthesis system used to read Italian out loud, was also released during this decade.

- 1980s: Sun Electronics released Stratovox, a shooting-style arcade game notable for its use of synthesized speech. DECtalk, a standalone text-to-speech synthesizer from Digital Equipment Corporation, was released, and Steve Jobs founded NeXT, whose computers later ran a text-to-speech system developed by Trillium Sound Research.

- 1990s: Ann Syrdal at AT&T Bell Laboratories developed a female speech synthesizer voice. Engineers worked to make voices more natural-sounding.

- 2000s: Quality and standards for synthesized speech became a working issue for developers. This coalesced in researchers using a common speech dataset along with deeper research into additional vectors of creation, such as emotions.

- 2010s: OpenAI released GPT-1, the first iteration of their generative language model. Researchers introduced the AttnGAN model, which uses attention mechanisms to generate images from text descriptions. Since then, there have been numerous advancements in this field, with companies like OpenAI and NVIDIA developing their own image-generating models.

- 2020s: OpenAI has continued to refine and develop the technology, releasing GPT-3 in 2020. With the ability to generate human-like text, GPT-3 has opened up new possibilities for text-to-text creation. In 2022, OpenAI released ChatGPT to the public, and then released an updated model in 2023 that takes image inputs.

Voice-to-creation is also text-based creation

Before we draw the firm conclusion that text underlies universal creation, we need to consider how voice-based creation fits into the picture. In the past few years, we have used our voices to activate AI assistants on devices powered by Alexa and Siri. In a 2018 interview with Vox, tech entrepreneur and investor Marc Andreessen predicted that “voice is going to be the primary user interface for the majority of computing over the next 10 years.” This prediction may still come true, but for that to happen, we need to understand that the engine powering voice interfaces is still based on speech-to-text models, with text as the intermediate step.

Voice-enabled virtual assistants such as Amazon’s Alexa and Apple’s Siri rely on speech-to-text models to convert spoken language into written language. This written language is then processed by natural language processing algorithms that help the technology understand and respond to the user’s request. Even the most advanced virtual assistants on the market, including Google Assistant and Amazon’s Alexa, are powered by language models that are trained on vast amounts of written text. This is also true for other forms of media creation – the intermediate step is almost always text-to-creation at this time.
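The flow described above (speech is converted to text, the text is interpreted, and a text reply is produced) can be sketched in a few lines of Python. Every function here is a hypothetical stand-in written for illustration: `speech_to_text` only pretends to transcribe, and `parse_intent` is a toy rule rather than a real natural language model. No actual assistant is claimed to work this way internally.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    """A parsed request: what the user wants done, and to what."""
    action: str
    subject: str


def speech_to_text(audio: bytes) -> str:
    """Stand-in for a transcription model (hypothetical).

    A real system would run a speech-to-text model here; this stub
    simply treats the audio bytes as UTF-8 text.
    """
    return audio.decode("utf-8")


def parse_intent(transcript: str) -> Intent:
    """Toy language-understanding step: first word is the action, the rest is the subject."""
    words = transcript.lower().strip(" .?!").split()
    if not words:
        return Intent(action="unknown", subject="")
    return Intent(action=words[0], subject=" ".join(words[1:]))


def respond(intent: Intent) -> str:
    """Produce a text reply that a speech synthesizer could then read aloud."""
    if intent.action == "play":
        return f"Playing {intent.subject}."
    return "Sorry, I did not understand that."


def handle_voice_request(audio: bytes) -> str:
    """Voice in, text in the middle, text out."""
    transcript = speech_to_text(audio)
    return respond(parse_intent(transcript))
```

Calling `handle_voice_request(b"Play some jazz")` walks through all three stages. The point of the sketch is that every stage after the first operates on plain text, which is why voice interfaces can be read as a special case of text-based creation.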

What happens next?

So, how do we evolve with text-to-creation from here? There are two key areas where innovation will supercharge our ability to create with text:

- More context as inputs, such as voice, vision, and motion. Relying solely on text can limit a model’s ability to infer all necessary context. While many models already support image inputs, we also need to incorporate video and other forms of real-world capture to enhance the results of our text-based prompts, and to extend into more creative fields that require understanding the nuances of emotion, intent, and preferences.

- Edge computing will allow models to tap into individually unique and private data inputs that fine-tune models to generate powerful outputs that we may have never imagined.

While we can’t predict exactly how text creation will evolve in the future, it is clear that new technologies will inevitably be created to capture more context and compute with more personalized sources of data. There’s a universe of outputs that power use cases that we probably can’t even imagine yet, and I’m excited to see what those are.